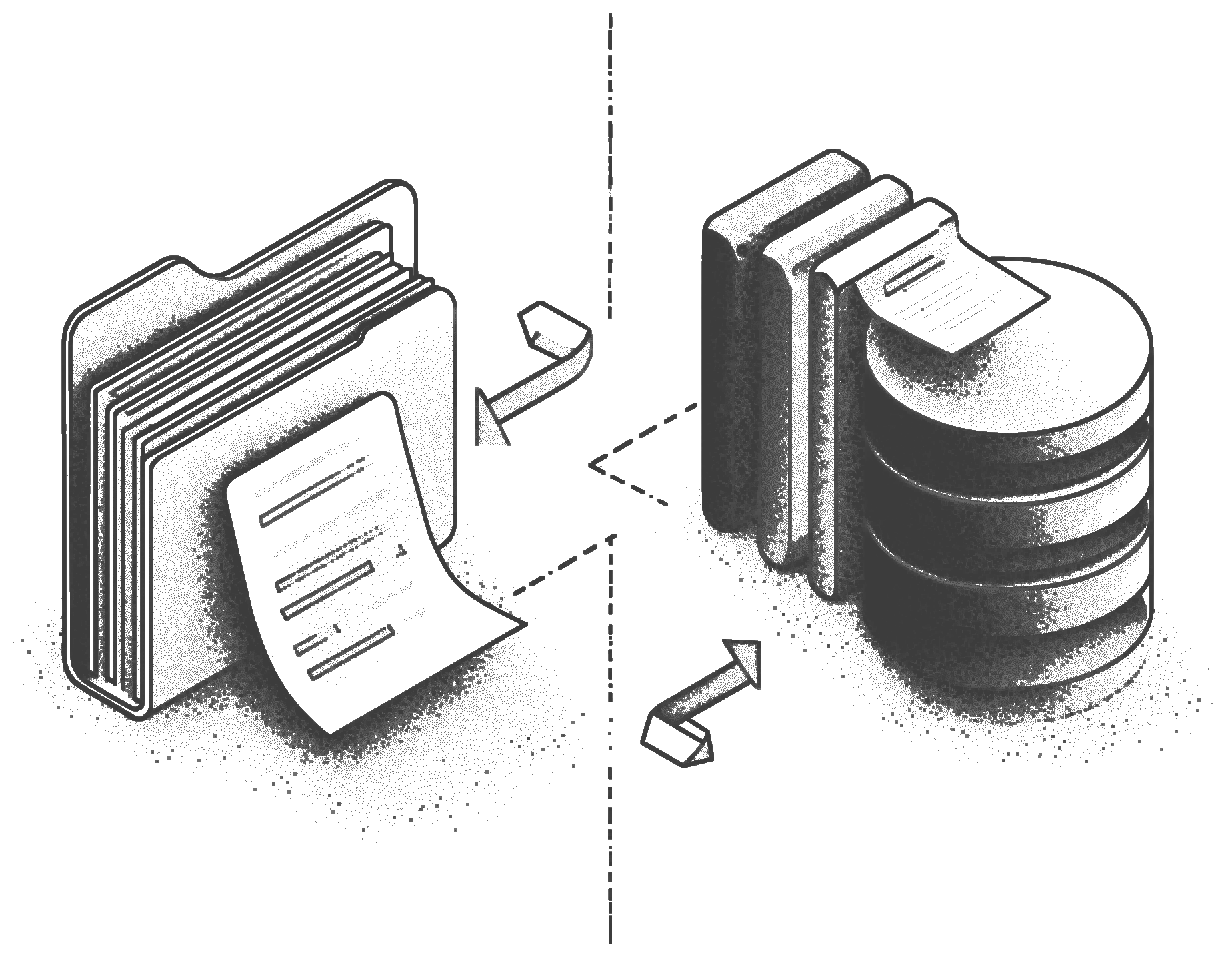

the ease of a filesystem, the semantics of a database.

start with files, get a database for free

Coordinating agents through local files means no transactions, no history, no structure. Git needs pull, push, and merge. S3 has no transactions.

TigerFS gives you the filesystem interface agents already know, with atomic writes and automatic version history. Works with Claude Code, grep, vim, and everything that speaks files.

- instead of local files: ACID transactions and version history, not bare files with no coordination guarantees

- instead of git: changes are visible immediately; no pull, push, or merge

- instead of s3: structured rows and transactions, not blobs you can only retrieve
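
From the agent's side, coordinating through a mounted directory can look like ordinary file I/O. The sketch below is illustrative only: the `notes/` layout and the helpers `post_note` and `read_notes` are hypothetical, and a temporary directory stands in for a TigerFS mount point so the code runs anywhere. Atomicity and version history would come from the mounted filesystem, not from this code.

```python
import tempfile
from pathlib import Path


def post_note(mount: Path, agent: str, text: str) -> Path:
    """Write one agent's note as a plain file under the shared directory."""
    notes = mount / "notes"  # hypothetical layout, not taken from TigerFS docs
    notes.mkdir(parents=True, exist_ok=True)
    path = notes / f"{agent}.md"
    path.write_text(text, encoding="utf-8")
    return path


def read_notes(mount: Path) -> dict[str, str]:
    """Read every agent's note back, keyed by agent name."""
    notes = mount / "notes"
    return {
        p.stem: p.read_text(encoding="utf-8")
        for p in sorted(notes.glob("*.md"))
    }


if __name__ == "__main__":
    # Assumption: a temp directory stands in for the real mount point.
    with tempfile.TemporaryDirectory() as tmp:
        mount = Path(tmp)
        post_note(mount, "planner", "split the task into three steps\n")
        post_note(mount, "reviewer", "step two needs a test\n")
        for agent, text in read_notes(mount).items():
            print(agent, "->", text.strip())
```

Nothing here is specific to one tool: any program that can open, write, and list files could take part in the same exchange.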

treat your data as a filesystem

Reaching into a database usually means a SQL client, a schema you have to remember, and client libraries to pass around. Agents pay that cost on every task.

TigerFS mounts any Postgres database as a directory, with the interface your agents already know mapped directly onto your data. Read rows, filter by index, chain queries into paths.

- instead of a database client: your agents already know how to work with files; no client libraries or schemas to pass around

- instead of a separate workflow: review and edit row data with vim, cat, diff, and the tools you already use
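
As a rough sketch of what reading rows through a directory could look like, the example below assumes each table is a directory and each row a JSON file named by its key. That layout, the `users` table, and the helpers `read_row` and `find_rows` are all hypothetical; the source does not specify how TigerFS lays out rows or expresses index filters in paths, so the filter here is a plain client-side scan. A temporary directory stands in for the mount.

```python
import json
import tempfile
from pathlib import Path


def read_row(mount: Path, table: str, key: str) -> dict:
    """Read one row, assuming it is exposed as a JSON file named by its key."""
    return json.loads((mount / table / f"{key}.json").read_text(encoding="utf-8"))


def find_rows(mount: Path, table: str, column: str, value) -> list[dict]:
    """Scan a table directory and keep rows whose column equals value."""
    matches = []
    for path in sorted((mount / table).glob("*.json")):
        row = json.loads(path.read_text(encoding="utf-8"))
        if row.get(column) == value:
            matches.append(row)
    return matches


def seed_demo(mount: Path) -> None:
    """Create stand-in row files so the sketch runs without a database."""
    table = mount / "users"
    table.mkdir(parents=True, exist_ok=True)
    rows = [
        {"id": 1, "name": "ada", "role": "admin"},
        {"id": 2, "name": "lin", "role": "viewer"},
        {"id": 3, "name": "sam", "role": "admin"},
    ]
    for row in rows:
        (table / f"{row['id']}.json").write_text(json.dumps(row), encoding="utf-8")


if __name__ == "__main__":
    # Assumption: a temp directory stands in for the mounted database.
    with tempfile.TemporaryDirectory() as tmp:
        mount = Path(tmp)
        seed_demo(mount)
        print(read_row(mount, "users", "2"))
        print([r["name"] for r in find_rows(mount, "users", "role", "admin")])
```

The code needs only the standard library; no database driver or connection string appears anywhere in it.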